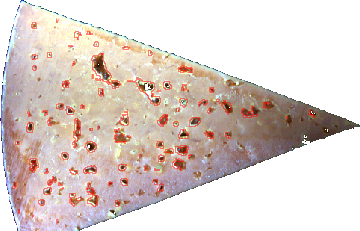

Identify objects by analyzing local spatial contrast and structural gradients at a specific spectral band. This method is highly effective for noisy data, using a multi-step pipeline of noise reduction and edge detection to isolate features based on texture rather than absolute spectral values.

The Structure Segmentation method identifies objects by analyzing spatial texture and local contrast differences at a specific spectral band. Unlike model-based segmentation (e.g., PCA), which relies on the absolute spectral signature of a pixel, Structure Segmentation identifies "edges" and "topographic changes" in the data.

This makes it exceptionally robust for noisy data or environments with inconsistent lighting, as it focuses on how a pixel differs from its immediate neighbors rather than its specific value.

How it Works

The process follows a linear pipeline:

- Band Extraction: A single grayscale image is created from the selected band.
- Median Filtering: A noise-reduction filter is applied to remove "salt and pepper" noise while preserving important edges.
- Derivative Calculation: The algorithm calculates the maximum difference (gradient) between pixels within a local window. This highlights "structures" like holes, cracks, or edges.
- Binarization: A threshold is applied to the derivative map to create a binary mask (object vs. background).
- Hole Filling: Small gaps within detected objects are automatically filled to ensure solid segments.
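
The steps above can be sketched in a few lines of plain Python. This is only an illustration of the idea on a small grid, not the software's actual implementation. The function names, the max-minus-min reading of "maximum difference", the clipping of windows at the image border, and the 4-connected hole fill are all assumptions made for the example.

```python
from statistics import median

def window(img, r, c, k):
    """Values in a k x k window around (r, c), clipped at the image borders."""
    h, w = len(img), len(img[0])
    lo, hi = k // 2, k - k // 2  # covers rows r-lo .. r+hi-1 (same for columns)
    return [img[y][x]
            for y in range(max(0, r - lo), min(h, r + hi))
            for x in range(max(0, c - lo), min(w, c + hi))]

def median_filter(img, k):
    """Replace each pixel with the median of its window (removes isolated outliers)."""
    return [[median(window(img, r, c, k)) for c in range(len(img[0]))]
            for r in range(len(img))]

def local_range(img, k):
    """Assumed 'derivative': largest minus smallest value inside each window."""
    out = []
    for r in range(len(img)):
        row = []
        for c in range(len(img[0])):
            vals = window(img, r, c, k)
            row.append(max(vals) - min(vals))
        out.append(row)
    return out

def fill_holes(mask):
    """Fill background regions that are not connected to the image border."""
    h, w = len(mask), len(mask[0])
    outside = [[False] * w for _ in range(h)]
    # Seed a flood fill with every background pixel on the image border.
    stack = [(r, c) for r in range(h) for c in range(w)
             if (r in (0, h - 1) or c in (0, w - 1)) and not mask[r][c]]
    while stack:
        r, c = stack.pop()
        if outside[r][c]:
            continue
        outside[r][c] = True
        for y, x in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            if 0 <= y < h and 0 <= x < w and not mask[y][x] and not outside[y][x]:
                stack.append((y, x))
    # Anything that is not object and not reachable from the border is a hole.
    return [[mask[r][c] or not outside[r][c] for c in range(w)] for r in range(h)]

def structure_mask(cube, band, median_kernel=3, derivative_kernel=3, threshold=0.5):
    """cube[row][col] is a list of band values; returns a boolean object mask."""
    gray = [[pixel[band] for pixel in row] for row in cube]      # band extraction
    smooth = median_filter(gray, median_kernel)                  # median filtering
    contrast = local_range(smooth, derivative_kernel)            # derivative
    mask = [[v > threshold for v in row] for row in contrast]    # binarization
    return fill_holes(mask)                                      # hole filling
```

Note that the derivative step responds only where values change, so the flat interior of a uniform object produces no contrast on its own. In this sketch it is the hole-filling step that turns the detected outline into a solid segment.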

Parameters

Band

Specify the band number (wavelength) to use for analysis.

Tip: Choose the band where the object has the highest visual contrast against the background.

Median kernel

The size of the noise-reduction filter (e.g., 3, 5, or 7 pixels).

Increase this if your raw data is very "grainy" or noisy.

Derivate kernel

The window size used to calculate local contrast. A smaller kernel (3–5) detects sharp, fine details; a larger kernel (7+) is better for broader, softer structures.

It is generally best to use an odd kernel size (such as 3 or 5). An even size such as 2 has no true central pixel, so the calculation window shifts, or "gravitates", toward the origin instead of staying centered on the pixel being evaluated. Odd-sized kernels are standard in image processing because they keep the resulting segmentation better aligned with your features. Even sizes are still accepted and will produce results.
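
To see why an even kernel drifts, it helps to list which neighbors a window covers along one axis. The small sketch below assumes one common convention, in which the extra pixel of an even window falls on the origin side. The function name is hypothetical and the software may place its windows differently.

```python
def window_offsets(kernel_size):
    """Offsets covered by a 1-D window, relative to the pixel being evaluated."""
    start = -(kernel_size // 2)
    return list(range(start, start + kernel_size))

for k in (2, 3, 4, 5):
    offsets = window_offsets(k)
    balance = "centered" if sum(offsets) == 0 else "off-center"
    print(k, offsets, balance)
```

For odd sizes the offsets are symmetric around 0. For even sizes there is one more pixel on the negative (origin) side than on the positive side, which is the shift described above.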

Threshold

The sensitivity level for detection. Lower values pick up subtle textures; higher values require a sharper contrast/edge to trigger a detection.

Min area

The minimum number of pixels an object must contain to be included in the results. Used to filter out small noise artifacts.

Max area

The maximum number of pixels allowed for an object. Used to exclude the background or large connected components that aren't of interest.

If set to 0, no maximum area is applied.
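
As a rough illustration of how Min area and Max area combine, the check below treats both limits as inclusive, which is an assumption, and treats a Max area of 0 as "no upper limit". The function name is made up for the example.

```python
def within_area_limits(area, min_area, max_area=0):
    """True when an object's pixel count passes the Min area / Max area checks."""
    if area < min_area:
        return False
    # A Max area of 0 disables the upper limit.
    return max_area == 0 or area <= max_area

areas = [4, 50, 120, 9000]
kept = [a for a in areas if within_area_limits(a, min_area=10, max_area=1000)]
```

With these settings the 4-pixel noise speck and the 9000-pixel background region are both dropped, while the two mid-sized objects remain.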

Object filter

Use an expression to further exclude unwanted objects based on shape.

Properties that can be used for the Expression:

- Area
- Length
- Width
- Circumference
- Regularity
- Roundness
- Angle
- D1
- D2
- X
- Y
- MaxBorderDistance
- BoundingBoxArea

For details on each available property see: Object properties Details

Shrink

Removes the specified number of pixels from the borders of the detected objects.
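
Shrinking of this kind is commonly done with morphological erosion. The sketch below is one possible reading, not the software's documented behavior: each pass removes object pixels that touch the background through a 4-connected neighbor, and pixels outside the image are treated as background. The function name is hypothetical.

```python
def shrink(mask, pixels):
    """Erode a boolean mask: each pass strips one pixel from every object border."""
    h, w = len(mask), len(mask[0])
    for _ in range(pixels):
        # Keep a pixel only if it and all four direct neighbors are object pixels.
        mask = [[mask[r][c] and all(
                     0 <= y < h and 0 <= x < w and mask[y][x]
                     for y, x in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)))
                 for c in range(w)]
                for r in range(h)]
    return mask
```

Applied to a 5 x 5 square, one pass leaves a 3 x 3 square and two passes leave only the center pixel, so objects thinner than twice the shrink value disappear entirely.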

Separate

- Normal
  - Can have both separated and combined objects.
- Separate adjacent objects
  - All objects are defined separately.
- Merge all objects into one
  - All objects are defined as one.
- Merge all objects per row
  - All objects per row segmentation are defined as one.
- Merge all objects per column
  - All objects per column segmentation are defined as one.

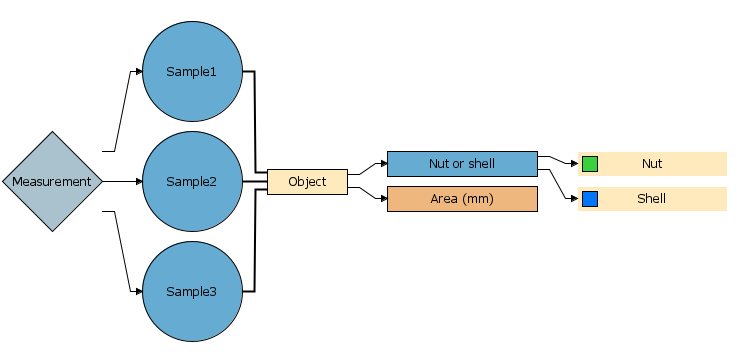

Link

Only visible when applicable

Links the output objects from two or more segmentations to the top segmentation. Descriptors can then be added to the common object output and will be calculated for objects from all linked segmentations.

The segmentations must be at the same level to be available for linking.